Common Voice by Mozilla: The immediate future of human-machine interaction lies in voice control, with smart speakers, home appliances and phones that listen for commands and act on them.

However, voice assistants such as Amazon's Alexa or Apple's Siri reflect their overwhelmingly white, male developers and appear to have racial biases.

For example, if you have an unusual accent, or English is not your native language, chances are you won't get what you're asking for.

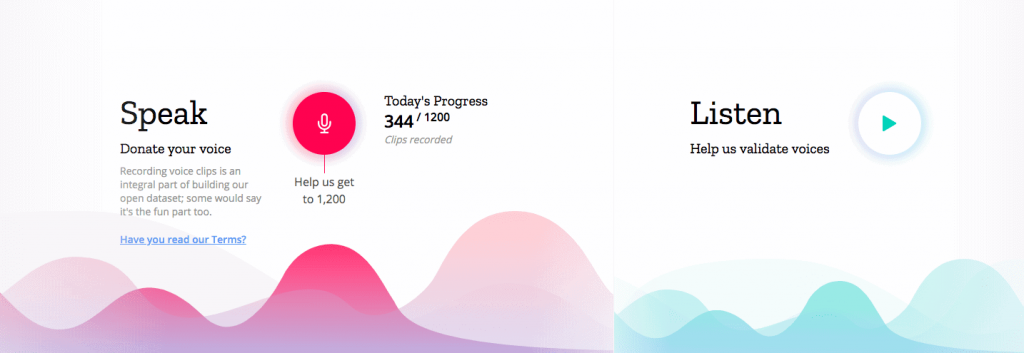

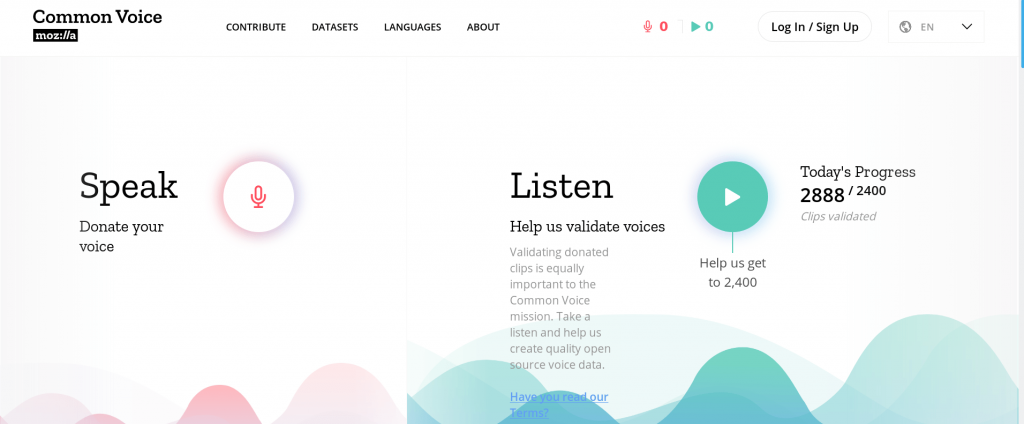

To address this, Mozilla, a free software community, launched Common Voice in 2017, a project that collects voice recordings into datasets in order to build AI that represents the world's population, not just the West.

Common Voice works by publicly releasing an ever-growing dataset, so any company can use the data to research, build and train its own voice applications, improving voice recognition for everyone, regardless of language, gender, age or accent.

Currently, the dataset holds more than 2,400 hours of voice data in 29 languages, including English, French, German, Chinese and Kabyle.

"Existing speech recognition services are only available in languages that are economically profitable," Kelly Davis, Mozilla's head of Machine Learning, told TNW.

Speech is becoming the preferred way to interact with technology, helped by the development of new services such as Amazon's Alexa and Google Assistant.

"These voice assistants have transformed the way we communicate with technology. However, the innovative potential of this technology remains largely untapped, because developers, researchers and startups around the world working on voice recognition face one problem: the lack of voice data available in many languages for training speech-to-text engines," explains Davis.

Although Davis believes that AI is beginning to improve, if slowly, it is still far from where it needs to be. At the end of 2017, Amazon added an Indian English accent to Alexa, allowing it to pronounce Indian phrases and understand some Indian inflections.

But the voice assistant still caters largely to the West, as six of the seven languages it supports are European.

In early 2018, Google announced Hindi support for its voice assistant, but the feature was limited to a few questions. A few months after the initial release, Google updated the feature so that Google Assistant can now hold a conversation in Hindi, the third most widely spoken language in the world.

"Efforts to bridge the AI gap have largely fallen into the hands of non-corporate groups," Davis said.

For example, the Black in AI project, which looks for ways to integrate non-Western voice features into AI, was started by former Google employees in 2017.

However, it did not begin as a formal extension of the company's work. It began as a way to address what its founders saw as a pressing need in the community.

Davis argues that only a few people benefit from voice recognition technology right now.

"Think about how speech recognition could be used by minority language speakers to allow more people to access the technology and services that the internet can offer, even if they have never learned to read."

"The same goes for the visually impaired or the disabled, but today's market does not seem to be able to help them."

The Common Voice project hopes to accelerate data collection in every language around the world, regardless of accent, gender or age.

"By making this data available, and by developing a speech recognition engine (the Deep Speech project), we can empower entrepreneurs and communities to bridge the gaps," Davis added.

If you want to help diversify the Common Voice project's voice data, you can record yourself reading the suggested sentences, or listen to other people's recordings and verify that they are accurate.
