What is deep learning, and how does it work within the neural networks that power today's artificial intelligence?

Artificial intelligence (AI) is a revolution in technology today. The term refers to the ability of a machine to reproduce human cognitive functions such as learning, planning and creativity.

Artificial intelligence makes machines able to "understand" their environment, to solve problems and act towards achieving a specific goal.

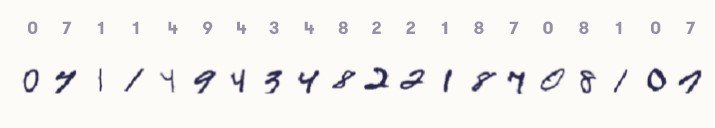

For example, the computer must work out which digit each handwritten symbol above represents. Do you find the problem simple and the answers correct? Some of the "correct" answers are questionable. Look at the second image from the left, for example: is it really a 7, or is it a 4?

Here we move away from simple algorithms and enter something bigger that can meet the above requirement. The computer receives data (either prepared in advance or collected through sensors, e.g. a camera), processes it and responds based on its "experience" with handwritten text. But how is this done?

Going beyond the original, simplistic methods of achieving artificial intelligence, technology now focuses on a technique called deep learning, which is powered by artificial neural networks.

Deep learning, neural networks? Strange words and meanings. The following is a graphical explanation of how these neural networks are structured and trained.

Definitions - Concepts

Machine Learning

Machine learning is the area of computer science that studies and builds algorithms that can learn from data and make predictions about it. It is applied to a range of computational tasks where designing and explicitly programming an algorithm is impractical or impossible.

Machine learning algorithms therefore do not tell the computer exactly what to do. Instead, they build models from sample data in order to make predictions or decisions, which are expressed as the result.

Examples of applications are spam filters, optical character recognition (OCR), search engines, and computer vision.

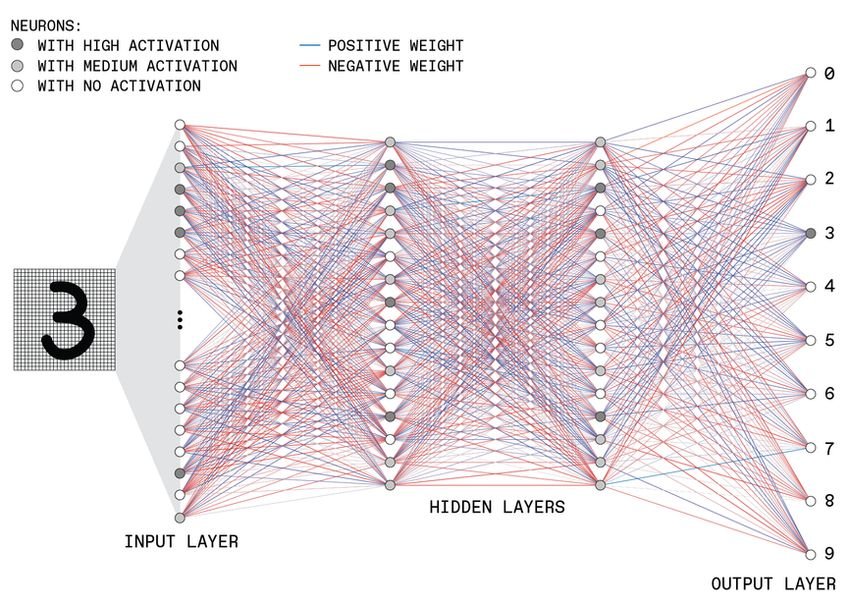

Neural network architecture

Each neuron in an artificial neural network sums its multiple inputs and applies a function to determine its output. This architecture is inspired by what happens in our brain, where neurons transmit signals to each other through synapses.

Here is the structure of a hypothetical deep neural network ("deep" because it contains multiple hidden layers). This example shows a network that reads an image containing a handwritten number and classifies it as one of the 10 possible digits.

The input layer contains many neurons, each of which is activated by the grayscale value of one pixel in the image. These input neurons connect to the neurons of the next layer, transmitting their activation level after it has been multiplied by a certain value, called a weight. Each neuron in the second layer adds up its many inputs and applies a function to determine its output, which is fed forward in the same way.
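The feed-forward step described above can be sketched in a few lines of plain Python. This is only an illustration: the sigmoid activation function, the tiny layer sizes and all weight values are assumptions chosen for the example, not details taken from the network in the figure.

```python
import math

def sigmoid(x):
    """Squash any real number into the range (0, 1). Assumed activation."""
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(activations, weights):
    """Compute one layer's outputs from the previous layer's activations.

    weights[j][i] is the weight on the connection from input i to neuron j.
    """
    outputs = []
    for neuron_weights in weights:
        # Each neuron sums its weighted inputs, then applies the function.
        weighted_sum = sum(w * a for w, a in zip(neuron_weights, activations))
        outputs.append(sigmoid(weighted_sum))
    return outputs

# Hypothetical toy network: 3 input "pixels" -> 2 hidden neurons -> 2 outputs.
pixels = [0.0, 0.5, 1.0]                         # grayscale values in [0, 1]
hidden_w = [[0.2, -0.4, 0.6], [-0.5, 0.3, 0.1]]  # made-up weights
output_w = [[1.0, -1.0], [-1.0, 1.0]]            # made-up weights

hidden = layer_forward(pixels, hidden_w)
output = layer_forward(hidden, output_w)
print(hidden, output)
```

A real digit recognizer would have hundreds of input neurons (one per pixel) and 10 output neurons, but the calculation in each layer has the same shape.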

Deep Learning

Deep Learning is a machine learning technique (one of the many techniques that make up machine learning) in which several "layers" of simple processing units are connected into a network, one after the other, so that the input to the system passes successively through each of them.

This architecture was devised by modeling the processing of visual information in the brain, i.e. information that enters through the eyes and is captured by the retina, travels through the optic nerve and reaches the brain.

This depth allows the network to learn more complex structures without requiring unrealistically large amounts of data.

Training

This kind of neural network is trained by calculating the difference between its actual output and the desired output (which of course requires knowing the correct result in advance).
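As a rough illustration of that difference, the sketch below scores a network's 10 outputs against the desired outputs for a handwritten "3". The sum of squared differences is an assumption (one common choice of loss), and both sets of network outputs are made-up values.

```python
def squared_error(actual, desired):
    """Sum of squared differences between actual and desired outputs."""
    return sum((a - d) ** 2 for a, d in zip(actual, desired))

# Desired output for a handwritten "3": neuron 3 fully on, all others off.
desired = [1.0 if digit == 3 else 0.0 for digit in range(10)]

# Hypothetical outputs of an undecided network and of a better one.
poor_output = [0.5] * 10
good_output = [0.0, 0.0, 0.0, 0.9, 0.0, 0.0, 0.0, 0.1, 0.0, 0.0]

print(squared_error(poor_output, desired))   # large: far from the target
print(squared_error(good_output, desired))   # small: close to the target
```

The smaller the number, the closer the network is to the answer we wanted, which is what training tries to achieve.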

The resulting mathematical optimization problem has as many dimensions as there are adjustable parameters in the network, chiefly the weights of the connections between neurons, which can be positive [blue lines] or negative [red lines].

Network training essentially finds a minimum of this multidimensional "loss" or "cost" function. This is done iteratively over many training passes, gradually changing the state of the network.
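To make "finding a minimum" concrete, here is a minimal sketch of gradient descent, a standard way of minimizing such a function. The two-weight loss function, the starting point and the learning rate are all hypothetical; a real network has a far more complicated loss with many more dimensions.

```python
def loss(w1, w2):
    """Hypothetical two-parameter loss with its minimum at (3, -1)."""
    return (w1 - 3.0) ** 2 + (w2 + 1.0) ** 2

def gradient(w1, w2):
    """Partial derivatives of the loss with respect to each weight."""
    return 2.0 * (w1 - 3.0), 2.0 * (w2 + 1.0)

w1, w2 = 0.0, 0.0        # arbitrary starting weights
learning_rate = 0.1      # size of each small adjustment (assumed value)

for step in range(100):
    g1, g2 = gradient(w1, w2)
    # Move each weight a little in the direction that lowers the loss.
    w1 -= learning_rate * g1
    w2 -= learning_rate * g2

print(w1, w2, loss(w1, w2))
```

Each pass changes the weights only slightly, but after enough passes they settle very close to the values that minimize the loss.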

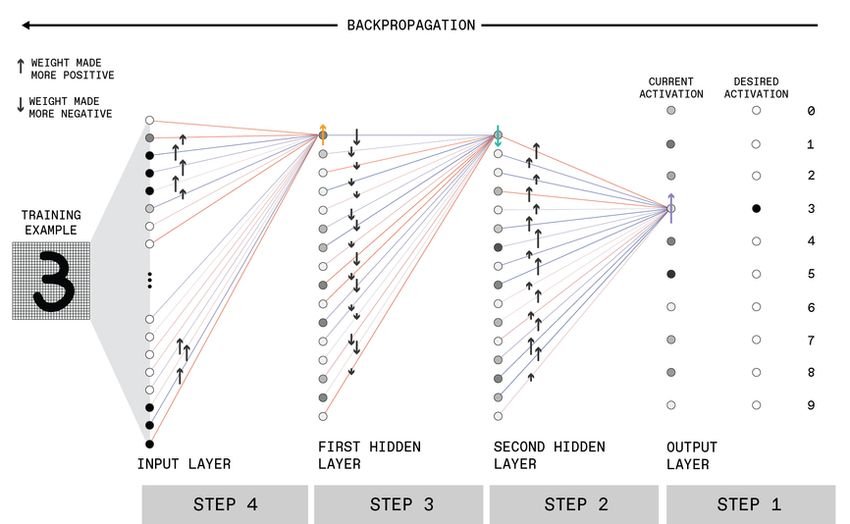

In practice, this means making many small adjustments to the network's weights based on the outputs calculated for a random set of input examples, each time starting with the weights that feed the output layer and moving backwards through the network. (Only connections to a single neuron in each layer are shown here, for simplicity.)

This backpropagation process is repeated over many random sets of training examples until the loss function is minimized. The network then gives the best results it can for any new inputs.
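The repeated loop over random sets of examples can be sketched with the simplest possible "network": a single weight. Everything here is an assumption made for illustration, including the training data (outputs that are exactly twice the input), the batch size of 4 and the learning rate.

```python
import random

random.seed(0)  # fixed seed so the run is repeatable

# Hypothetical training set: inputs x with desired outputs 2 * x.
examples = [(i / 10.0, 2.0 * i / 10.0) for i in range(-10, 11)]

weight = 0.0          # the single adjustable parameter
learning_rate = 0.1   # assumed step size

for iteration in range(500):
    batch = random.sample(examples, 4)   # a random set of input examples
    # Average gradient of the squared error (weight * x - y)^2 over the batch.
    grad = sum(2.0 * (weight * x - y) * x for x, y in batch) / len(batch)
    weight -= learning_rate * grad       # one small weight adjustment

print(weight)  # ends up close to 2.0, the value that minimizes the loss
```

No single batch is enough to find the right weight, but many small corrections, each based on a different random batch, add up to a good result.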

We understand that you may be a little confused, so let's walk through the example above in more detail. We train the computer to recognize the number 3 written casually by hand.

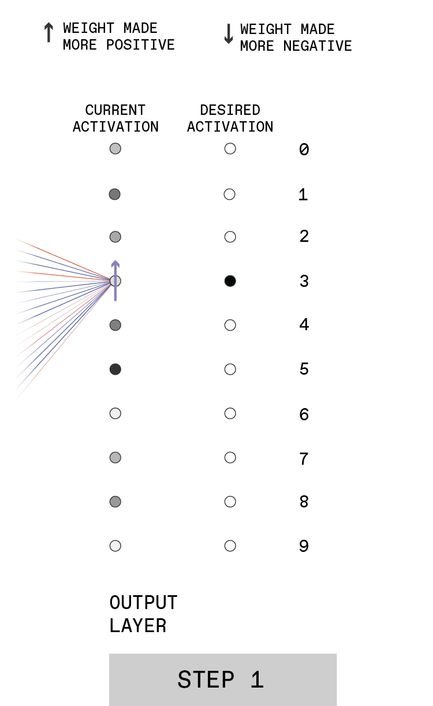

STEP 1

When presented with a handwritten “3” at the input, the output neurons of an untrained network will have random activations. The goal is for the output neuron associated with 3 to have high activation [dark shading] and the other output neurons to have low activations [light shading]. Thus, the activation of the neuron associated with 3, for example, must be increased [purple arrow].

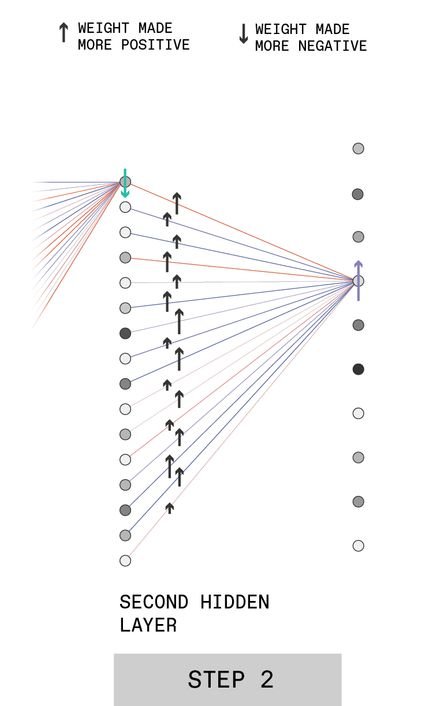

STEP 2

To do this, the weights of the connections from the second hidden layer neurons to the output for digit "3" must be made more positive [black arrows], with the magnitude of the change being proportional to the activation of the connected neuron.
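The rule in this step, a change proportional to the activation of the connected neuron, can be sketched as follows. This is a simplified version that ignores the slope of the activation function, and the function name, activations, weights and learning rate are all hypothetical.

```python
def update_output_weights(weights, hidden_activations, error, learning_rate):
    """Nudge each weight by an amount proportional to its source activation.

    error is (desired - actual) for the output neuron; a positive value
    means the neuron should fire more strongly.
    """
    return [w + learning_rate * error * a
            for w, a in zip(weights, hidden_activations)]

# Hypothetical values: activations of the second hidden layer, and the
# weights on their connections to the output neuron for digit "3".
hidden_activations = [0.9, 0.1, 0.0]
weights_to_three = [0.2, 0.2, 0.2]
error = 1.0 - 0.3   # desired activation 1.0, actual activation 0.3

new_weights = update_output_weights(weights_to_three, hidden_activations,
                                    error, learning_rate=0.5)
print(new_weights)
```

The connection from the strongly active neuron changes the most, the weakly active one changes a little, and the connection from the inactive neuron does not change at all.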

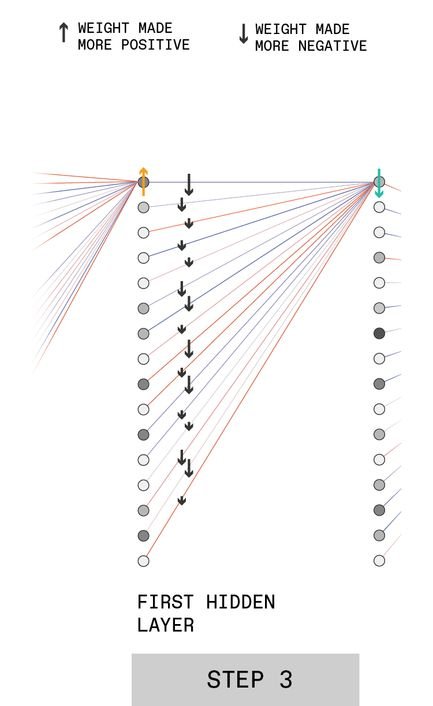

STEP 3

A similar process is then performed for the neurons in the second hidden layer (remember that training proceeds backwards through the network).

For example, to make the network more accurate, the top neuron in this layer may need to reduce its activation [green arrow]. The network can be pushed in this direction by adjusting the weights of its connections to the first hidden layer [black arrows].
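How does the network know whether a hidden neuron should be more or less active? One common approach is to pass the output errors backwards through the connection weights. The sketch below is a simplified version that leaves out the slope of the activation function, and the function name, errors and weights are made-up values.

```python
def hidden_errors(output_errors, output_weights):
    """Pass output errors backwards through the connection weights.

    output_weights[k][j] is the weight from hidden neuron j to output k.
    Returns one error signal per hidden neuron.
    """
    n_hidden = len(output_weights[0])
    return [sum(output_errors[k] * output_weights[k][j]
                for k in range(len(output_errors)))
            for j in range(n_hidden)]

# Hypothetical errors (desired - actual) for two output neurons.
output_errors = [0.7, -0.4]   # output 0 should rise, output 1 should fall
output_weights = [[-0.5, 0.8],
                  [0.6, 0.1]]

signals = hidden_errors(output_errors, output_weights)
print(signals)
```

A negative signal means the hidden neuron should reduce its activation and a positive signal means it should increase it. In this example the first hidden neuron gets a negative signal, so the weights feeding it would be adjusted to make it less active.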

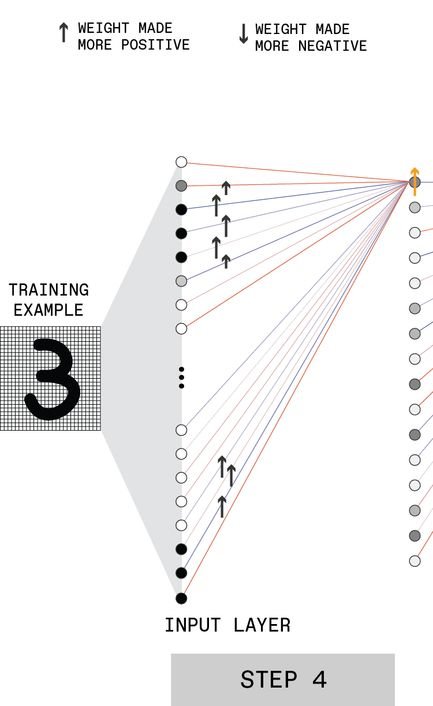

STEP 4

The process is then repeated for the first hidden layer, the one directly after the input. For example, the first neuron in this layer may need to have increased activation [orange arrow].

By adjusting the connection weights in this way, we essentially configure the algorithm that recognizes the number 3 and train the computer to recognize any other handwritten 3 it is given later.