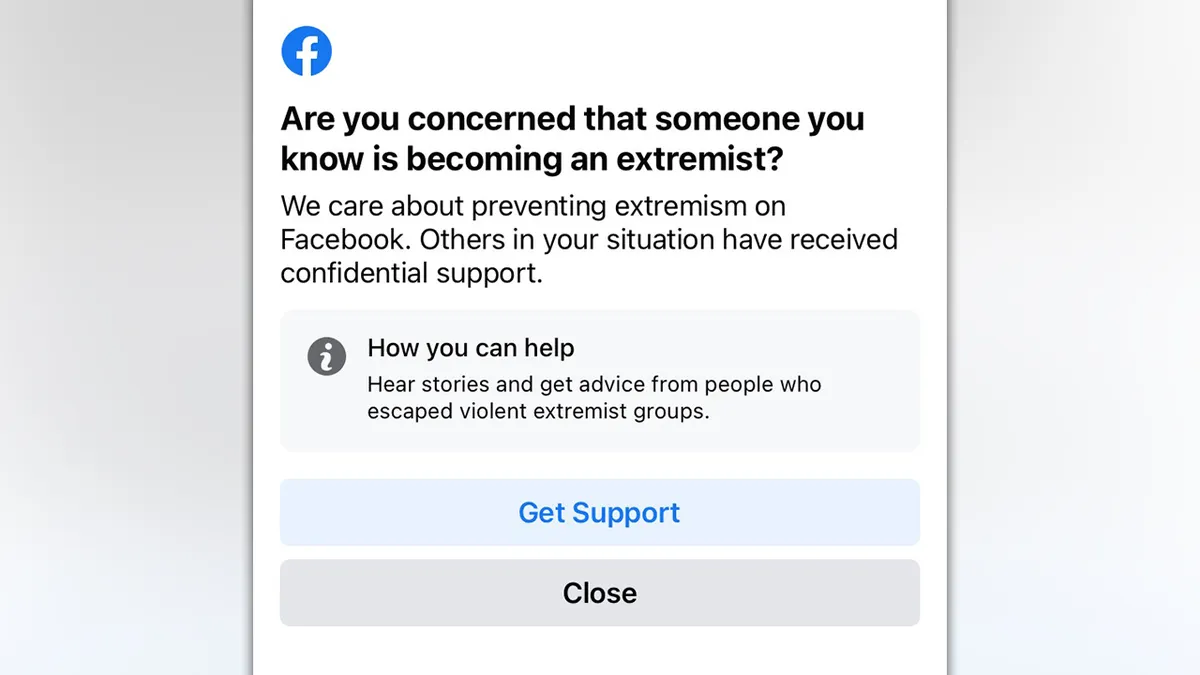

Facebook is starting to warn some users who may have seen "extremist content" on the social network, the company said on Thursday.

Screenshots shared on Twitter showed a notification asking "Worried someone you know is becoming an extremist?" or warning "You may have recently been exposed to harmful extremist content." Both screenshots included links to "get support".

The world's largest social network has long been under pressure from lawmakers and civil rights groups to combat extremism on its platforms, including U.S. domestic movements involved in the January 6 attack on the Capitol.

Hey has anyone had this message pop up on their FB? My friend (who is not an ideologue but hosts lots of competing chatter) got this message twice. He's very disturbed. pic.twitter.com/LjCMjCvZtS

- Kira (@RealKiraDavis) July 1, 2021

Facebook said it is running a small test on its main platform as a pilot in the United States. The test is meant to help develop a global approach to preventing radicalization on the platform.

"This test is part of our larger work to evaluate ways to help and support people on Facebook who may have engaged with or been exposed to extremist content, or who may know someone who is at risk," said a Facebook spokesman.

"We work with NGOs and academic experts in this field and hope to have more to share in the future."

Facebook said the test identifies both users who may have been exposed to extremist content that violated its community rules and users who had themselves caused problems on Facebook with extremist content.

The company, which has tightened its rules against violent groups and hate speech in recent years, said it removes content and accounts that violate its rules as a precaution, before other users see them. Such content can be viewed only if it has been reviewed and approved by the company.

Are you one of those who believe that:

the EU is a continuation of the Nazis?

the euro is the destruction of Greece?

the Constitution is violated daily by those who preach the defense of the EU?

the sale of ports, airports, OTE, PPC, the water utilities, the railways, the national roads, etc. contradicts the principles of the Constitution?

a compulsory medical act (vaccination, in this case) is contrary to medical ethics and to the rules of Hippocratic science?

Greek banks also received guarantees (which they converted into cash) of around €300 billion?

the recapitalizations of the banks from 2008 onwards, which reached ~180% of their assets at the time, make the auctions of people's property illegal, unconstitutional and immoral?

the elite (local and foreign) does what it wants, how it wants and for as long as it wants in our country?

the "white collars" (doctors, MPs, ministers, bankers) enjoy immunity, even though this is contrary to the Constitution?

the differences between the parties in Parliament are no greater than the difference between ears of wheat and wheat?

corruption in the State and in the ranks of the "builders", of ELAS and of the Urban Planning offices has hit the ceiling?

prostitution and drugs are easier to find in every corner of the country?

the welfare state and the rule of law are a "nice line" found only in science fiction stories set in the café "Beautiful Greece"?

Hellenism is in a state of absolute coercion and moral depression, with no dreams for its future?

all sorts of people, from all over the Earth, enter our country illegally?

a population change is being attempted, and is under way, in the country, since for the elite what matters is not who lives in the café "Beautiful Greece" but the supply of cheap and servile workers?

the settlement of Greece by immigrants is the most modern and extreme manifestation of the slave trade in the world?

Macedonia, Alexander the Great and the Aegean were always Greek, not Skopjan or Turkish?

the Greeks are working (on the orders of their bosses) on ways to hand the Aegean, too, to foreign powers (to Turkey, with Germany as the final destination, since in any case these two countries have always been the same)?

the Turks and the Nazis are the same shop?

Well then, CRIMINALS, the favabook is right to be looking for you, through the idiots of the System and the servile slaves who wait for some crumb to fall from their masters' table so they can eat it (after licking their masters' soles).

P.S. Guys, if this post disappears, I love you, I appreciate you and I respect you. But most of all, I understand you.

From the bottom of my heart, good morning to every… terrorist, extreme, extremist, crazy and "sprayed" person described above.