Facebook will now warn you with a message if you visit pages that constantly share fake news.

In another step to fight misinformation on the platform, Facebook will warn you about pages that repeatedly spread fake news.

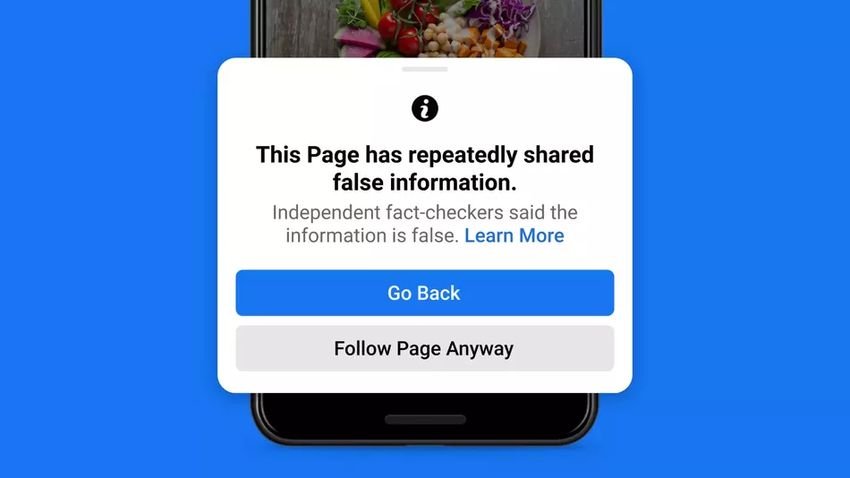

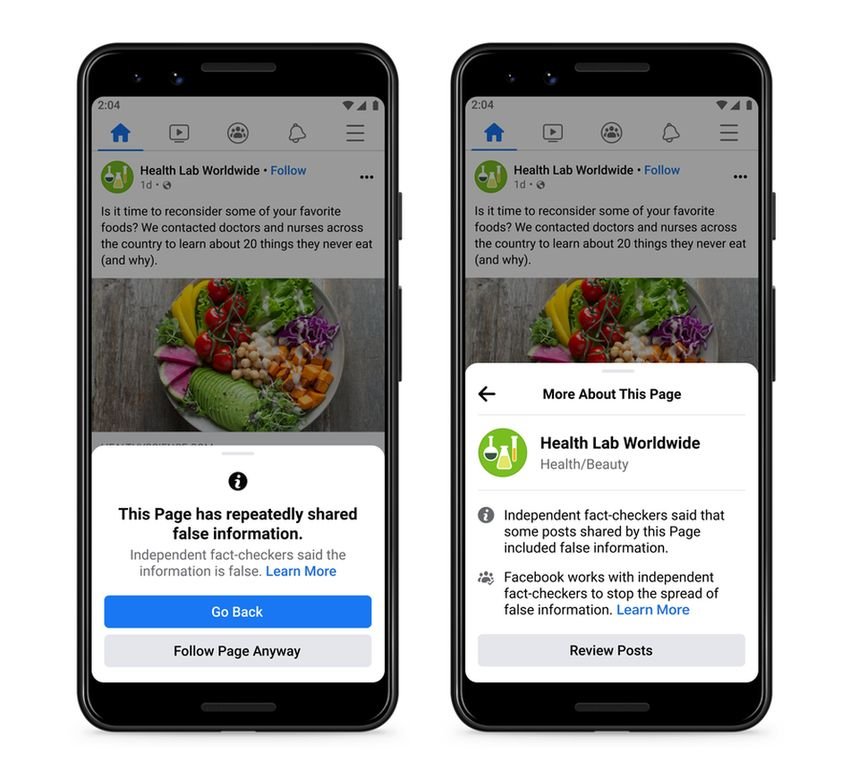

If you try to visit such a page, you will see a pop-up message saying that the page has "repeatedly shared false information" and that "independent fact-checkers said the information is false". You will then be given the option to go back to the previous page or to follow the page anyway.

There will also be a "Learn more" link, which explains why the page has been flagged, and another link with more information about Facebook's fact-checking program.

The company also said it would expand penalties for individual Facebook accounts that repeatedly share misinformation, meaning other users will see fewer posts from those accounts in their News Feed.

Finally, Facebook has redesigned the notifications that appear when users share content that fact-checkers have identified as false. The notification will now include the fact-checker's article, which explains why the post is misleading, along with an option to share that article. Users will also be told that posts from people who repeatedly share fake news will be placed lower in the News Feed, making it less likely that other users will see them.

Over the past two years, Facebook has introduced a number of measures to combat misinformation on the platform. These include limiting message forwarding in Messenger, encouraging users to read an article before sharing it, placing warning labels on fake news, deleting accounts that spread misinformation and, most famously, excluding Donald Trump from the platform.