Facebook-Cambridge Analytica: In the early 2000s, Alex Pentland led the wearable computing team at the MIT Media Lab. The Media Lab is where the first ideas for augmented reality and Fitbit-style fitness trackers took shape.

At the time, of course, the devices were bulky. They had to be carried around in bags, and the researchers wore cameras on their heads.

"It was basically a bunch of cell phones that we had to glue together," Pentland tells Wired. But the hardware was not the important part; what mattered was the way the devices interacted with one another.

"We were able to see all the people on earth," the researcher says: where they went and what they bought.

By the middle of the decade, as people began signing up for social networks like Facebook, Pentland and other social scientists started examining network and mobile phone data to see how viral content spreads, how friends connect with one another, and how political alliances form.

"We inadvertently invented a particle accelerator to understand human behavior," said David Lazer, a Harvard political scientist.

"It became clear that everything was changing in terms of understanding human behavior." So at the end of 2007, Lazer and Pentland organized a conference titled "Computational Social Science" to examine what big data could tell us about people.

In early 2009, the conference participants published a statement of principles in the prestigious journal Science.

The paper anticipated the Facebook-Cambridge Analytica scandal, in which the online behavior of millions of users was exploited to build a detailed picture of their personalities and preferences. That knowledge was then used to try to influence the US elections.

"These vast, emerging data on how people interact certainly offer qualitatively new perspectives on understanding collective human behavior," the researchers write.

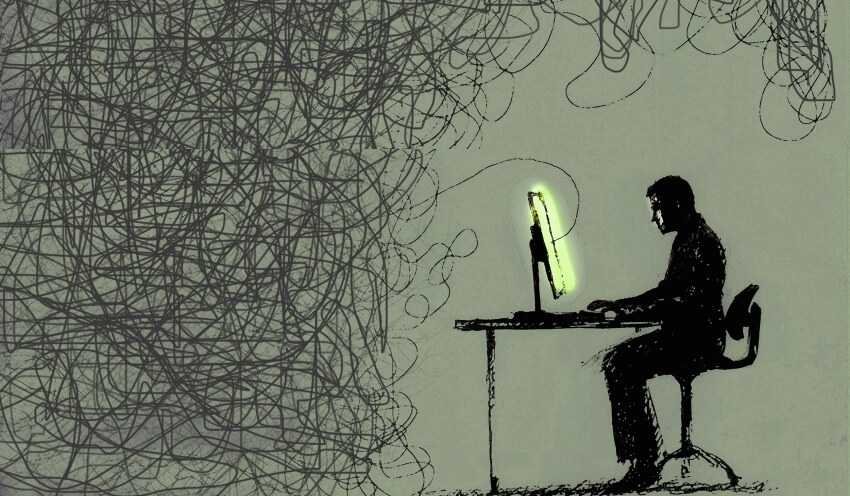

But all this emerging understanding came with dangers.

"Perhaps the thorniest challenges on the data side are access and privacy," the study said.

"Because a single dramatic privacy breach could produce rules and regulations that stifle the emerging field of computational social science, self-regulation is required: procedures, technologies and rules that reduce this risk while preserving research momentum."

Perhaps even more worrying than Cambridge Analytica's attempt to sway the election result (which many considered unachievable) is the role scientists played in facilitating moral harm.

Although scientists were among the first to warn us about big data and the corporate surveillance that would follow, the social sciences also saw in it an opportunity to gain prestige.

"Most of what we think we know about humanity is based on very few facts, and so it is not a strong science," said Pentland, a co-author of the 2009 study.

Data and computational social science promised to change that. Data makes possible a science that not only quantifies the world but can also predict what is to come. Other sciences do this for stars, DNA and electrons. The social sciences cannot (or rather could not) do it for people.

Then came a true quantum leap.

Observation and prediction lead to the ability to intervene in a system. It is the same progression seen in genetics, where observing DNA sequences led to an understanding of heredity.

This is what Cambridge Analytica set out to do: use computational social science to influence behavior. The company claimed it could do so, and it apparently used deception to acquire the data.

It was exactly the disaster the authors of the 2009 study had warned about.