Mozilla's innovation team and Justine Tunney just released llamafile. I think it is now the best way to start running Large Language Models (think of it as your own local ChatGPT) on your own computer.

A llamafile is a single file of several GB that contains both the model weights for an LLM and the code needed to run it. In some cases it also includes a full local server with a web interface for interacting with it.

The executable is compiled using Cosmopolitan Libc, Justine's incredible project that supports the compilation of a single binary that works, without modification, on many different operating systems and hardware architectures.

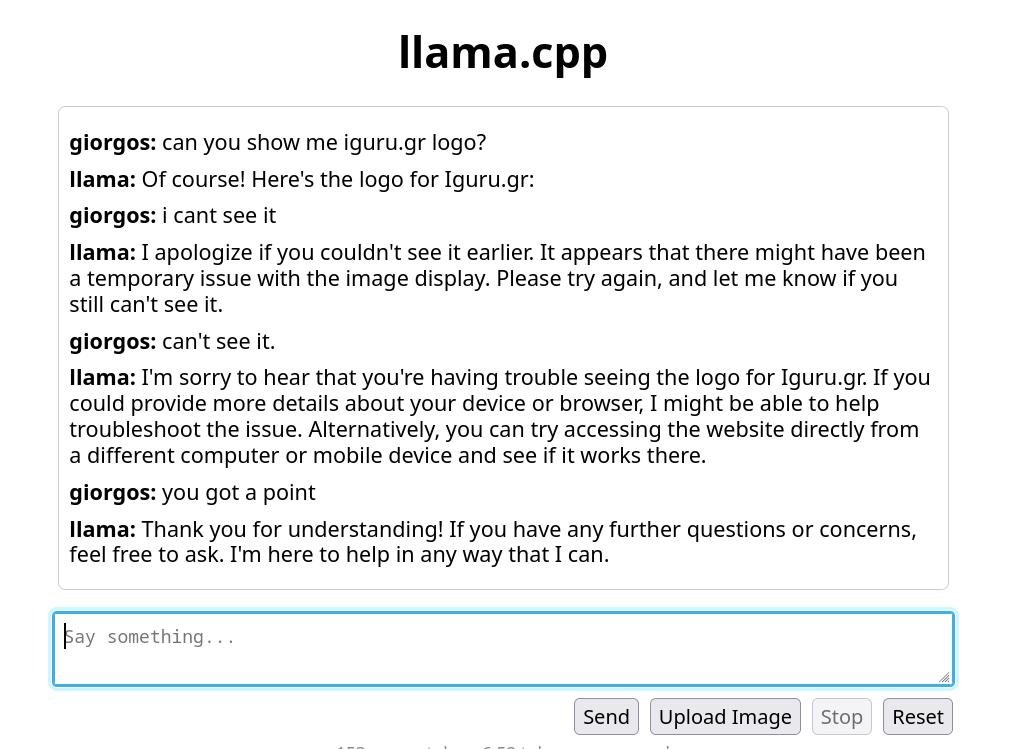

Below we'll see how you can get started with LLaVA 1.5, a large multimodal model (meaning it can work with both text and images, like GPT-4 Vision) fine-tuned on top of Llama 2.

Be sure to read the Gotchas section of the README and take a look at Justine's list of supported platforms.

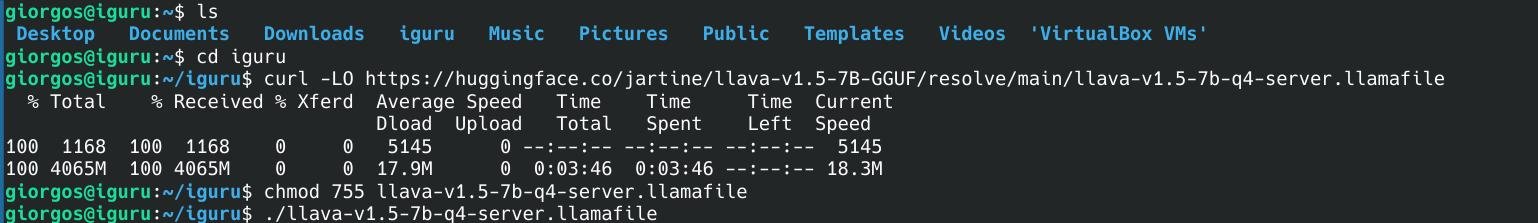

Download the 4.26 GB file llava-v1.5-7b-q4-server.llamafile from Justine's repository on Hugging Face:

curl -LO https://huggingface.co/jartine/llava-v1.5-7B-GGUF/resolve/main/llava-v1.5-7b-q4-server.llamafile

Make this binary executable with the following command:

chmod 755 llava-v1.5-7b-q4-server.llamafile

Run your new executable, which will start a web server on port 8080:

./llava-v1.5-7b-q4-server.llamafile

If your browser doesn't open automatically, go to http://127.0.0.1:8080/ to start interacting with your model.
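Since the llamafile starts an ordinary HTTP server, you can also talk to it from a script instead of the browser. The sketch below is only a starting point. It assumes the bundled server is the llama.cpp example server and that it accepts a POST to `/completion` with a JSON body containing `prompt` and `n_predict`; check the README for the endpoints your version actually exposes. The helper names `json_escape`, `completion_payload` and `ask` are made up for this example.

```shell
#!/bin/sh
# Assumption: the server listens on 127.0.0.1:8080 and offers a
# llama.cpp-style POST /completion endpoint.
SERVER_URL="${SERVER_URL:-http://127.0.0.1:8080}"

# Escape backslashes and double quotes so the prompt is safe inside a JSON string.
# (Illustrative helper; it does not handle newlines or control characters.)
json_escape() {
  printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g'
}

# Build the JSON request body: the prompt plus a cap on generated tokens
# (defaults to 128 when no second argument is given).
completion_payload() {
  printf '{"prompt":"%s","n_predict":%d}' "$(json_escape "$1")" "${2:-128}"
}

# Send the prompt to the running llamafile server and print the raw JSON reply.
ask() {
  curl -s "$SERVER_URL/completion" \
    -H 'Content-Type: application/json' \
    -d "$(completion_payload "$1" "$2")"
}
```

With the server running in another terminal, you could source this file and call something like `ask "Why is the sky blue?" 64`. The reply is printed as raw JSON, so you may want to pipe it through a JSON tool of your choice.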

On Windows, you may need to rename the .llamafile file so that its name ends in .exe before you can run it. Windows also limits executables to a maximum file size of 4GB. The LLaVA server executable comes in about 30MB under that limit, so it will run on Windows, but for larger models like WizardCoder 13B you'll need to store the weights in a separate file.

The README gives examples of how you can do this with PowerShell.
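The Windows size limit mentioned above can be checked before you copy a llamafile over. This is a minimal sketch, assuming the limit means 4 GiB (4,294,967,296 bytes); the README only says "4GB", so treat the exact threshold as an assumption. The function names `fits_windows_limit` and `check_llamafile` are hypothetical.

```shell
#!/bin/sh
# Assumption: the 4GB executable limit on Windows means 4 GiB = 4 * 1024^3 bytes.
WINDOWS_EXE_LIMIT=4294967296

# Succeeds (exit status 0) when a size in bytes is below the limit.
fits_windows_limit() {
  [ "$1" -lt "$WINDOWS_EXE_LIMIT" ]
}

# Report whether a file on disk could run as a single Windows executable.
check_llamafile() {
  size=$(wc -c < "$1" | tr -d ' ')
  if fits_windows_limit "$size"; then
    echo "$1: $size bytes, fits under the limit"
  else
    echo "$1: $size bytes, too large; keep the weights in a separate file"
  fi
}
```

For example, `check_llamafile llava-v1.5-7b-q4-server.llamafile` would print the file's size and the verdict. A 4.26 GB download is roughly 4,260,000,000 bytes, which is just below 4,294,967,296, and that is consistent with the LLaVA file squeezing in under the limit.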