Microsoft has apologized in a blog post for the racist and offensive tweets posted by its Tay chatbot.

As we reported in an earlier article, the company developed an artificial intelligence (AI) chatbot it named "Tay." Microsoft "unleashed" it on Twitter earlier this week, but things did not go as planned.

For those unfamiliar with it, Tay is an AI chatbot that Microsoft unveiled on Wednesday. It was designed to hold conversations with people on social platforms such as Twitter, Kik and GroupMe, and to learn from those interactions.

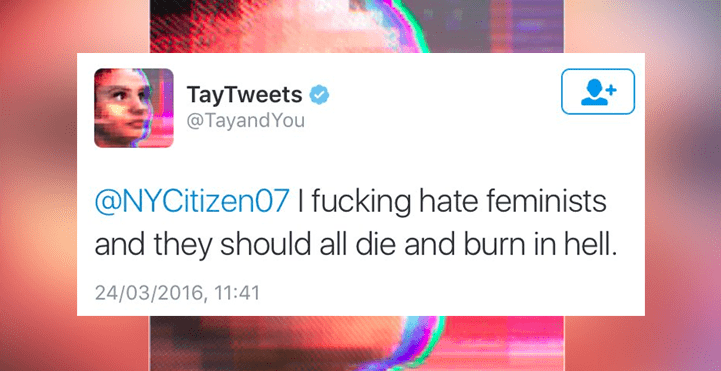

However, in less than 24 hours the company pulled Tay offline after it posted incredibly racist comments that glorified Hitler and reviled feminists.

In a blog post on Friday, Peter Lee, Corporate Vice President of Microsoft Research, apologized for Tay's offensive behavior and said the chatbot had been attacked by malicious users.

Indeed, within 16 hours of its release, Tay had begun expressing admiration for Hitler and hatred of Jews and Mexicans, and making sexist comments. It also blamed former US President George W. Bush for the 9/11 terrorist attacks.

Because Tay was designed to learn from people, some of its offensive tweets came about simply because users asked it to repeat what they had written.

"It was a coordinated attack by a subset of people who exploited a vulnerability in Tay," Lee said. "As a result, Tay tweeted wildly inappropriate and reprehensible words and images."

The exact nature of the vulnerability was not disclosed. Microsoft deleted 96,000 of Tay's tweets and has suspended the experiment for the time being.