If deepfakes scared or even amused you, AI-powered deepfakes will probably wreak havoc. Here's what the future holds for AI deepfakes.

The telltale signs of a deepfake image or video used to be easy to spot, but artificial intelligence has supercharged the technology. Fake media is now becoming very convincing, making us question almost everything we see and hear.

With every new AI model that comes out, the telltale signs of a fake image are getting harder to spot. To add to the confusion, you can now create deepfake videos, clone the voices of your favorite people, and write fake articles in seconds.

To avoid being fooled by AI deepfakes, it's worth knowing what kind of risks they pose.

The Evolution of Deepfakes

A deepfake shows a person doing something they never did in real life. It is completely fake. We laugh at deepfakes when they are shared online as a joke, but not all of them are harmless, and not all of them are funny.

In the past, deepfakes were created by taking an existing photo and altering it in image-editing software like Photoshop. What sets an AI deepfake apart is that it can be created from scratch using deep learning algorithms.

The Merriam-Webster Dictionary defines a deepfake as:

An image or recording that has been convincingly altered and manipulated to make someone appear to be doing or saying something that was never actually said or done.

But with advances in AI technology, that definition is starting to look outdated. With AI tools, deepfakes now span images, text, video, and cloned voices. Sometimes all four types of AI-generated content are combined.

Because the process is automated, incredibly fast, and cheap, AI is the perfect tool for producing deepfakes at an unprecedented rate, and you don't need to know anything about editing photos, videos, or audio to get started.

The Big Dangers of AI Deepfakes

There are already a number of AI video generators (such as GliaCloud and Synthesia), along with many AI voice generators (such as Replica Studios and FakeYou). Throw a large language model like GPT-4 into the cauldron, and you have a recipe for the most believable deepfakes we've seen so far.

Being aware of the different kinds of AI deepfakes and how they can be used to trick you is one way to avoid being scammed. Here are just a few serious examples of how deepfake AI technology poses a real threat.

1. Identity Theft with AI

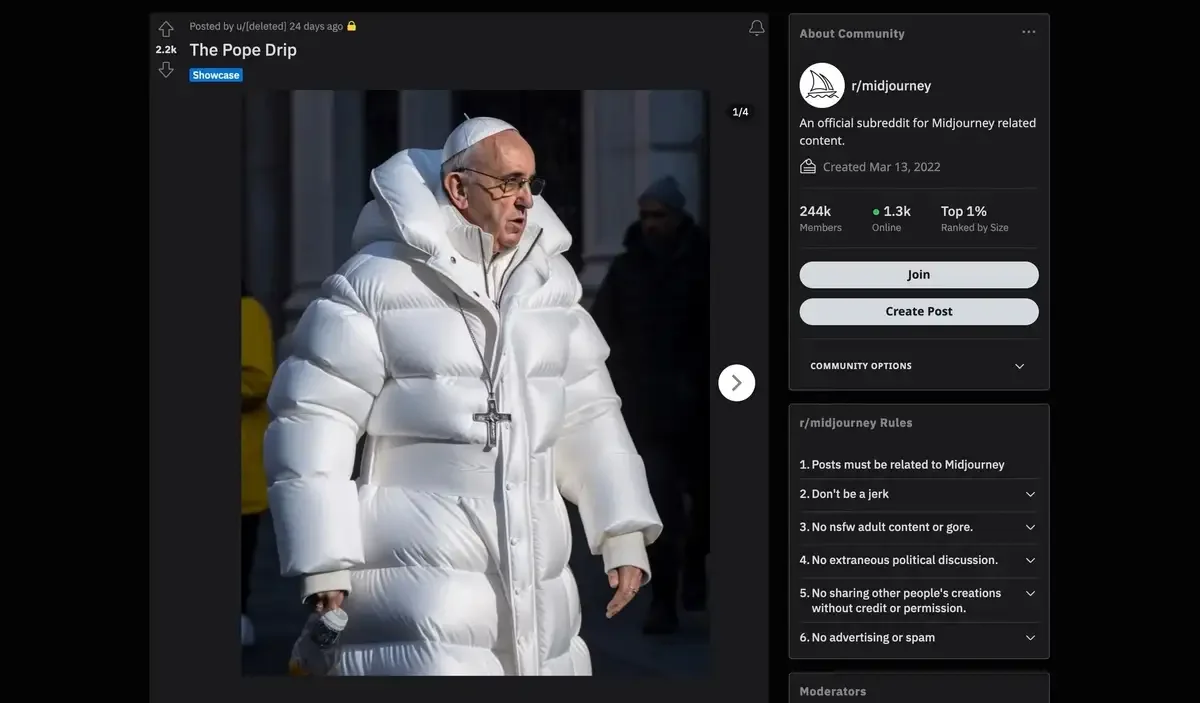

You may have seen them. Among the first truly viral AI deepfakes to spread around the world were an image of Donald Trump being arrested and one of Pope Francis in a white jacket.

The Pope image seems innocent enough: a playful take on what a famous religious figure might wear on a cold day in Rome. The Trump image below, on the other hand, shows a political figure in serious trouble with the law, which could have far greater consequences if taken as real.

Making pictures of Trump getting arrested while waiting for Trump's arrest. pic.twitter.com/4D2QQfUpLZ

- Eliot Higgins (@EliotHiggins) March 20, 2023

So far, people creating AI deepfakes have mostly targeted celebrities, political figures, and other famous people. This is partly because famous people have lots of photos online, which likely helped train the AI models.

With an AI image generator like Midjourney, which was used for both the Trump and Pope deepfakes, a user only needs to enter a text prompt describing what they want to see.

Keywords such as "photorealism" can be used to define the art style, and the results can be improved by upscaling the resolution.

You can just as easily learn to use Midjourney and try it out for yourself, but for obvious ethical and legal reasons, you should refrain from posting these images as real.

Unfortunately, being an average, non-famous person doesn't guarantee that you are safe from AI deepfakes.

The problem lies in a key feature that AI image generators offer: the ability to upload your own image and manipulate it with AI. A tool like Outpainting in DALL-E 2 can extend an existing image beyond its original borders; you simply enter a text prompt describing what else you would like to generate.

If someone else did this to your photos, the risks could be much greater than with the deepfake of the Pope in a white jacket.

They could use the results anywhere, pretending to be you. While most people use AI with good intentions, there are very few restrictions preventing others from using it to cause harm, especially when it comes to identity theft.

2. Deepfake Voice Clone Scams

With the help of artificial intelligence, deepfakes have crossed boundaries that most of us were not prepared for: fake voice clones. With a small amount of original audio (perhaps from a TikTok video you once posted or a YouTube video you appear in) an AI model can reproduce your unique voice.

It's strange and scary to receive a phone call where the caller sounds like your family member, or friend, or colleague.

Don't trust the voice. Call the person who supposedly contacted you and verify the story, using a phone number you know belongs to them. If you cannot reach your loved one, try to get in touch with them through another family member or their friends.

The Washington Post reported the case of a couple in their 70s who received a phone call from someone who sounded exactly like their grandson. The caller said he was in jail and urgently needed bail money. Having no reason to doubt who they were talking to, they handed over the money to the scammer.

It is not only the older generation that is at risk. The Guardian reported the case of a bank manager who approved a $35 million transaction after a series of hoax calls from someone he believed to be a company director.

3. Fake News Mass Production

Language models like ChatGPT are very good at producing human-sounding text, and we currently don't have effective tools to tell the difference. In the wrong hands, they can churn out fake news and conspiracy theories cheaply, and debunking them takes much longer.

Spreading misinformation is nothing new, of course, but a research paper published on arXiv in January 2023 explains that the problem is how easily it scales with AI tools. The authors refer to this as "artificial intelligence-generated influence campaigns", which they say could, for example, be used by politicians to outsource their political campaigns.

Combining more than one type of AI-generated content produces a more sophisticated deepfake. For example, an AI model can write a well-crafted, persuasive news story to accompany the fake image of Donald Trump being arrested. This lends the image more legitimacy than if it were shared on its own.

Fake news isn't limited to images and text either: advances in AI video generation mean we're seeing more deepfake videos appear. Here's one of Robert Downey Jr. grafted onto an Elon Musk video, posted by the YouTube channel Deepfakery.

Creating a deepfake can be as simple as downloading an app. You can use an app like TokkingHeads to turn still images into animated ones. It lets you upload your own image and audio to make it look like the person is speaking.

It's mostly fun, but there's also potential for trouble. It shows how easy it is to use someone's image to make it look like they said words they never actually said.

Don't Be Fooled by an AI Deepfake

Deepfakes can be developed quickly, at very little cost, and with little expertise or computing power required. They can take the form of a generated image, a voice clone, or a combination of AI-generated images, audio, and text.

It used to be much more difficult and demanding to create a deepfake, but now, with so many AI applications out there, almost anyone has access to the tools needed to make one. As AI-powered deepfake technology becomes more advanced, it's worth keeping a close eye on the risks involved.